There’s a new category of SaaS tool you’ve probably been pitched on recently: AI visibility tools. I know my inbox gets about 10 of these cold outreach emails every day.

These platforms promise to tell you how often your brand shows up when someone asks ChatGPT, Perplexity, or Google’s AI Mode a question relevant to your product. They’re also known as GEO tools (generative engine optimization), AEO tools (answer engine optimization), or LLM monitoring platforms.

Whatever you call them, they’re proliferating fast, they’re hungry for your business, and they’re not cheap.

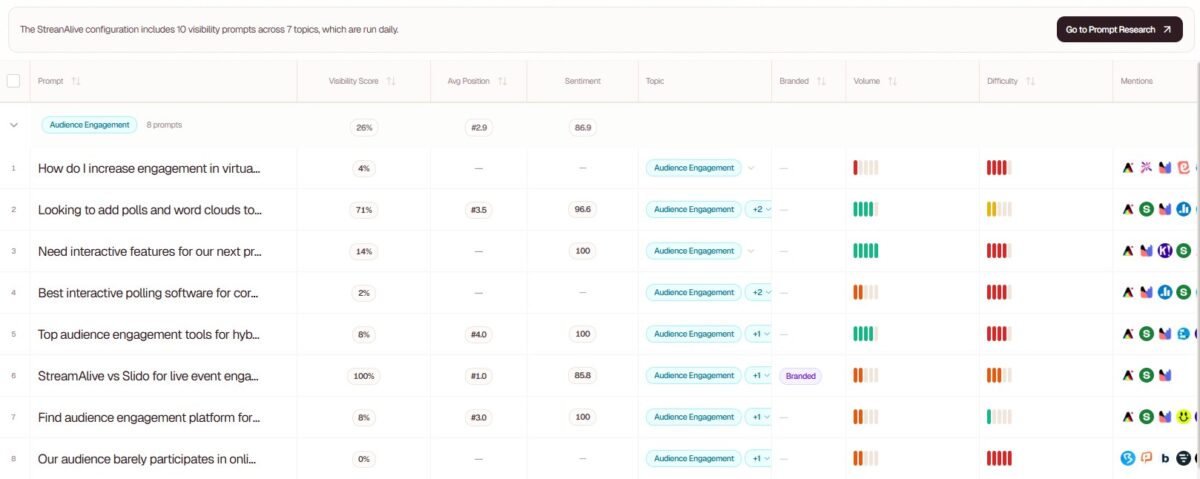

This post cuts through the marketing noise. I’ve looked at the main players, the pricing, the critical research on what these tools can and can’t reliably measure, and the emerging data on LLM traffic behaviour. I’ve also pulled in real conversion data from StreamAlive, where I’m the fractional CMO, that puts an interesting twist on why this channel matters more than its raw traffic numbers suggest.

Whether you’re a solo marketer, a B2B SaaS founder, or running a team evaluating whether to spend budget here, here’s what you actually need to know.

The Main Players and What They Cost

Here’s an honest breakdown of the tools most marketers are evaluating in 2026:

| Tool | Entry Price | Mid Tier | Prompts (Entry) | Best For |
|---|---|---|---|---|
| Peec AI | €85/month | €205/month | 50 prompts | Agencies, client reporting |
| Searchable | $50/month | $125/month | 50 prompts (ChatGPT only) | End-to-end optimization |
| Ubersuggest | $12/month or $120 lifetime | $20/month or $200 lifetime | 10 prompts/brand | Budget-conscious teams, all-in-one SEO |
| Otterly.AI | $29/month | $189/month | 15 prompts | Solo marketers, GEO audits |
| Semrush AI Toolkit | $199/month | Custom | 50 prompts | Teams already using Semrush |
| Profound AI | $499+/month | Custom | Custom | Enterprise, 10+ LLM coverage |

Sources: vendor pricing pages, Visiblie, Kime.ai (April 2026)

What AI Visibility Tools Actually Do

The core function of these platforms is to run prompts through large language models (LLMs) at scale and record whether your brand appears in the responses. They do this repeatedly across multiple AI engines: ChatGPT, Perplexity, Claude, Gemini, Google AI Overviews. Then they aggregate the results into dashboards showing your “mention rate,” “share of voice,” and sometimes your “ranking position” in those responses.

Beyond monitoring, the more feature-heavy platforms layer in competitive benchmarking, citation analysis (which of your pages are being linked by AI systems), content recommendations for improving your AI visibility, and technical audits checking whether LLM bots like GPTBot can crawl your site.
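To make the crawlability check concrete, here is a minimal sketch of what such an audit might do under the hood, using Python’s standard `urllib.robotparser`. The robots.txt content and the example.com URLs are hypothetical, and the bot names other than GPTBot are my assumptions, not something the vendors have confirmed they check.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""

# GPTBot is named in this post; the other crawler names are assumptions.
AI_BOTS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def crawl_access(robots_txt: str, url: str, bots: list[str]) -> dict[str, bool]:
    """Return whether each bot may fetch the URL under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


if __name__ == "__main__":
    for page in ("https://example.com/pricing", "https://example.com/private/report"):
        print(page, crawl_access(ROBOTS_TXT, page, AI_BOTS))
```

A real audit would fetch your live robots.txt and probably check more than robots rules, but this is enough to spot an accidental block on an AI crawler.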

The AI visibility tools category emerged in 2024-2025 as AI-powered search shifted from a niche experiment to a board-level priority. That pace of adoption explains why there are now dozens of tools jostling for position, and why the pricing across the category is all over the place.

The Top Four AI Visibility Tools to Monitor LLMs

Searchable

Searchable is the most feature-complete option I’ve come across. It combines AI visibility monitoring, prompt intelligence, citation analytics, GA4/Search Console integration, technical LLM crawlability audits, and a content studio, all in one platform. Founded in 2025 in London, Searchable reports 12,000+ brands tracked and 45M+ citations analyzed. It raised approximately $4M in seed funding at a $40M valuation in December 2025.

For a platform like StreamAlive, Searchable is genuinely brilliant. The depth of data is unmatched at its price point. But that breadth is also the issue: you’re paying for a lot of functionality that most teams, particularly smaller marketing teams, won’t use. It’s the kind of tool that makes sense if you’re actively optimizing across all these dimensions. For pure monitoring, it’s overbuilt and overpriced for most.

Ubersuggest

Ubersuggest added AI visibility tracking as part of its broader SEO platform. The feature shows how your brand appears inside large language models such as ChatGPT and Gemini, how often it shows up, and how your visibility compares with competitors. It’s not the deepest AI visibility tool on the market, but it’s bundled into an all-in-one SEO platform at a price point that’s genuinely disruptive.

The standout is the lifetime deal. The Individual plan costs $12/month or $120 as a lifetime payment, and the Business plan runs $20/month or $200 lifetime. For a solo marketer or small business that also needs keyword research and rank tracking, this is hard to argue with. You’re essentially getting AI visibility monitoring as a bonus feature attached to a capable SEO tool you’d likely need anyway.

Peec AI

Peec AI positions itself as an analytics platform for marketing teams, tracking brand visibility, position, and sentiment across AI search engines. It integrates with Looker Studio for client reporting and offers MCP/API access for developers. Founded in February 2025 in Berlin, Peec AI reports $4M+ ARR and tracks 1,300+ brands. All plans include unlimited user seats and daily AI visibility tracking. It’s well-regarded for its simplicity and focus, which is a genuine differentiator in a market full of bloated platforms.

Otterly

Otterly is worth mentioning for its entry price and GEO audit depth. At $29/month for the Lite plan, it’s the cheapest published price among the dedicated AI visibility tools covered here (Ubersuggest is cheaper, but it bundles AI tracking into a broader SEO suite). Otterly.AI was recognized as a Cool Vendor in the 2025 Gartner Cool Vendors for AI in Marketing. It covers ChatGPT, Google AI Overviews, Perplexity, and Copilot on base plans, and runs GEO audits covering 25+ on-page factors.

The limitation is prompt volume – 15 prompts on the Lite tier is thin if you’re tracking a competitive market with multiple keywords and competitors.

The Pricing Problem

The industry average cost across AI visibility tools is around $337/month, according to my analysis of 27 platforms, and that’s before you consider the prompt-based model most tools use. Run out of prompts and you’re either capped until the month resets or paying for extras. Some enterprise tools are very expensive, and the more you dig into multi-domain management, multiple AI engines, and frequent refresh rates, the faster the costs compound.

This is the fundamental tension in the category: you need to run prompts repeatedly to get statistically meaningful data, but most tools price by the prompt.
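One rough way to compare tools on that basis is price per tracked prompt at the entry tier. The sketch below uses the entry prices and prompt counts from the pricing table in this post. It leaves currencies unconverted and ignores differences in engine coverage and refresh frequency, so treat it as a starting point for vendor questions rather than a ranking.

```python
# Entry-tier figures copied from the pricing table in this post.
# Currencies are left as listed (no conversion), so compare within a currency.
ENTRY_TIERS = {
    "Peec AI": ("EUR", 85.0, 50),
    "Searchable": ("USD", 50.0, 50),
    "Ubersuggest": ("USD", 12.0, 10),
    "Otterly.AI": ("USD", 29.0, 15),
    "Semrush AI Toolkit": ("USD", 199.0, 50),
}


def cost_per_prompt(monthly_price: float, prompts: int) -> float:
    """Monthly price divided by the number of prompts included in the tier."""
    if prompts <= 0:
        raise ValueError("prompts must be positive")
    return monthly_price / prompts


if __name__ == "__main__":
    for tool, (currency, price, prompts) in ENTRY_TIERS.items():
        print(f"{tool}: {cost_per_prompt(price, prompts):.2f} {currency} per prompt per month")
```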

The Study That Should Make You Rethink “AI Rankings”

Before you spend anything on AI visibility tracking, there’s a piece of research you need to read.

In late 2025, SparkToro’s Rand Fishkin partnered with Patrick O’Donnell from Gumshoe.ai to run the most rigorous public study on AI recommendation consistency. The research involved 600 volunteers running 12 different prompts through ChatGPT, Claude, and Google AI a combined 2,961 times over November and December 2025. Each prompt ran 60-100 times per platform to generate statistically meaningful sample sizes.

The findings are eye-opening.

There is a less than 1-in-100 chance that ChatGPT or Google’s AI will give you the same list of brands in any two responses when asked 100 times. When it comes to ordering, AI tool responses are so random that it’s closer to 1 in 1,000 runs before you’d see two lists in the same order.

Fishkin’s conclusion was direct: “Any tool that gives a ‘ranking position in AI’ is full of baloney.”

This has real implications for how you evaluate the tools above. Any tool claiming to track where your brand ranks in AI recommendation lists is providing essentially random data points that shift with every query. The underlying variability makes position tracking statistically meaningless regardless of how sophisticated the tracking methodology appears.

What does survive statistical scrutiny? Visibility percentage across many runs of similar prompts, i.e., how often your brand appears across a large sample, not where it appears in a single response. This is an important distinction. Tools that aggregate mention rate across many prompt runs are giving you something real. Tools that report your “position 3 in ChatGPT” for a given keyword are not.
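To see why sample size matters for mention rate, here is a minimal sketch that puts a margin of error around an observed rate using a Wilson score interval. This is my own illustration, not the method used in the SparkToro study, and the counts (6 of 15 and 40 of 100) are hypothetical.

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a mention rate."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= mentions <= runs:
        raise ValueError("mentions must be between 0 and runs")
    rate = mentions / runs
    denom = 1 + z**2 / runs
    center = (rate + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(rate * (1 - rate) / runs + z**2 / (4 * runs**2)) / denom
    return center - half_width, center + half_width


# Same observed 40% mention rate, two hypothetical sample sizes.
for mentions, runs in [(6, 15), (40, 100)]:
    low, high = wilson_interval(mentions, runs)
    print(f"{mentions}/{runs} runs: plausible mention rate {low:.0%} to {high:.0%}")
```

With 15 runs, a 40% mention rate is compatible with anything from roughly 20% to 64%. With 100 runs the range narrows to roughly 31% to 50%, which is tight enough to compare against a competitor or against last month.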

The research also revealed that response variability correlates strongly with category breadth. In narrow categories with limited options, top brands appeared in most responses with relatively high consistency. In broader categories, the results scattered dramatically because the AI systems simply have more options to choose from.

Before purchasing any AI visibility tool, ask the vendor to show you their methodology. How many prompt runs do they use per query? Do they report mention rate or position data? Fishkin put it simply: “Before you spend a dime tracking AI visibility, make sure your provider answers the questions we’ve surfaced here and shows their math.”

Source: SparkToro / Gumshoe.ai — 2,961 prompt runs across ChatGPT, Claude, Google AI (Nov-Dec 2025)

The Reality of LLM Traffic Volume

So why bother tracking AI visibility at all if the data is noisy? Because even imperfect tracking of a growing channel matters. You just need to be clear-eyed about the current volumes.

The honest picture: LLM traffic is still small. Very small.

Across all analyzed sites in the Previsible State of AI Discovery Report, which covered 1.96 million LLM-driven sessions across 12 months, AI represents just 0.13% of traffic, roughly 1 in every 769 sessions.

Other datasets are slightly more generous. LLM referral traffic accounts for less than 2% of total referral traffic on average, according to a 13-month dataset from Search Engine Land’s analysis of its customer base. A separate analysis by Conductor across 13,770 domains and 3.3 billion sessions puts AI referral traffic at 1.08% of all website traffic, with 87.4% originating from ChatGPT alone.

At StreamAlive, we’re tracking around 4% of traffic from LLM sources, which is above average for the category. This is likely because the product is in an active buying category where people ask AI for tool recommendations, but also due to the amount of work we’ve done on increasing our visibility in the LLMs.
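If you want to estimate your own LLM share, one approach is to classify session referrers by hostname. The sketch below is a minimal illustration: the hostname list is my assumption about where these assistants send traffic from, and the sample referrers are made up, so check both against what actually appears in your analytics export.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for each assistant; verify against your own data.
LLM_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def llm_source(referrer: str) -> Optional[str]:
    """Return the assistant name for a referrer URL, or None if it is not an LLM."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return LLM_REFERRER_HOSTS.get(host)


def llm_share(referrers: list[str]) -> float:
    """Fraction of sessions whose referrer matches a known LLM hostname."""
    if not referrers:
        return 0.0
    hits = sum(1 for referrer in referrers if llm_source(referrer) is not None)
    return hits / len(referrers)


if __name__ == "__main__":
    # Hypothetical session referrers; an empty string stands for direct traffic.
    sample = [
        "https://chatgpt.com/",
        "https://www.google.com/search?q=webinar+tools",
        "",
        "https://www.perplexity.ai/search/abc",
    ]
    print(f"LLM share of sessions: {llm_share(sample):.0%}")
```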

The click-through rate problem makes these numbers even smaller than they appear. 60% of searches in traditional search engines now end without a click due to AI summaries, and click-through rates drop from 15% to 8% when an AI Overview is present, according to Pew Research Center data. Within AI Overviews themselves, only 1% of searches lead to a click on a link inside the overview. LLMs are designed to retain users, not route them. The traffic that does escape is earned, not accidental.

Sources: Previsible (0.13%), Search Engine Land (under 2%), Conductor (1.08%)

However, the volume story is only part of the picture. And it’s the less interesting part.

Why LLM Conversion Rates Change the Calculation

Here’s where the data gets genuinely interesting, and where I’ll add some context from our own experience at StreamAlive.

LLM visitors convert at dramatically higher rates than organic search visitors across every study that’s looked at this.

In Seer Interactive data from June 2025, ChatGPT converts at 15.9%, Perplexity at 10.5%, Claude at 5%, and Gemini at 3%, compared to Google’s organic conversion rate of 1.76%. Based on conversion rates, the average LLM visitor is worth 4.4 times more than the average traditional organic search visitor, according to Semrush’s AI search study.

Microsoft Clarity, analyzing over 1,200 publisher and news sites, found that traffic from AI-driven platforms converted at rates traditional channels couldn’t match, with referrals from Copilot converting at 17 times the rate of direct traffic and 15 times the rate of search traffic.

Source: Seer Interactive, June 2025

At StreamAlive, we’re seeing conversion rates from LLM-referred traffic in the 15-20% range. That’s roughly 2x our organic search conversion rate. The explanation isn’t complicated: these visitors have already been pre-sold.

Think about how high-intent referral traffic works. If someone reads an independent “8 best webinar tools for 2026” article and clicks through to StreamAlive, they’re not at the top of the funnel anymore.

They’ve done their research, read a comparison, and are actively evaluating. The LLM experience mirrors this exactly. Someone asks ChatGPT “what’s the best tool for live audience engagement?” and gets a response that includes a recommendation for StreamAlive, often with a brief explanation of why.

By the time they click through, they understand the product category, have formed a view of the options, and arrive with specific intent. That’s the same conversion logic as high-quality curated list traffic, but it’s AI doing the curation.

Across accounts analyzed by HockeyStack, 86% of hand-raisers from LLM traffic were classified as high-intent, often requesting demos or direct contact. That aligns with what we’re seeing. The traffic is small but it’s concentrated at the bottom of the funnel.

LLM referral traffic is growing fast: comparing the first half of 2025 to the second half, an average growth rate of 80% in LLM referral traffic was observed, with some companies experiencing 300% increases. The channel is small now but the trajectory is clear.

So Are AI Visibility Tools Worth Buying?

This is the question everything points back to. The answer depends on what you’re buying them for.

If you’re buying them to track your “ranking position” in AI responses, the SparkToro research is fairly damning. That metric is not reliable data. Any tool that sells you on it is selling you noise.

If you’re buying them to understand your broad visibility, meaning how often your brand surfaces across a meaningful sample of relevant prompts, then that’s a real and useful metric. The key is prompt volume. A tool running 10-15 prompts per keyword per month isn’t giving you statistically robust data. The SparkToro research suggests you need 60-100 runs per prompt to start seeing patterns that mean something.

If you’re buying them to diagnose content and technical gaps (which pages are getting cited, which aren’t, whether LLM bots can crawl your site, what your competitors are cited for that you’re not), then that’s where the more expensive all-in-one platforms genuinely earn their cost.

The budget calculus breaks down like this:

- Solo marketer or early-stage startup: Ubersuggest’s lifetime deal at $120-200 is the obvious starting point. It bundles AI visibility alongside a full SEO toolset. The AI monitoring features are less deep than dedicated platforms, but for a single brand at low budget, you’re getting meaningful directional data.

- B2B SaaS marketing team, 1-5 people: Otterly’s Standard plan at $189/month or Peec’s Starter at €85/month make sense. Both give you enough prompt volume and model coverage to track a competitive landscape meaningfully. Peec is particularly clean for teams that want data without feature overload.

- Growth-stage company or agency: Searchable at $125-400/month is worth serious consideration if you’re going to use the content studio and technical audit features. If you’re only monitoring, it’s more than you need.

- Enterprise: Profound covers up to 10 LLMs with custom prompt volumes and demographic filters. The pricing starts at $499/month and gets worse from there.

One option worth considering before buying anything: do six months of manual tracking first. Set up a simple spreadsheet, run your 10-15 most important prompts through ChatGPT, Perplexity, and Google AI Overviews weekly, and log your mention rate. It’s time-consuming but it gives you a baseline before you commit to platform spend. It also gives you a benchmark to measure a tool’s data against.
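If the spreadsheet lives as a CSV export, turning it into a mention rate per engine takes only a few lines. This is a minimal sketch: the column names and the sample rows are hypothetical, so adapt them to however you log your own runs.

```python
import csv
import io
from collections import defaultdict

# Hypothetical log; the column names and rows are illustrative, not real data.
SAMPLE_LOG = """\
date,engine,prompt,brand_mentioned
2026-01-05,ChatGPT,best tool for live audience engagement,yes
2026-01-05,Perplexity,best tool for live audience engagement,no
2026-01-12,ChatGPT,best tool for live audience engagement,yes
2026-01-12,Perplexity,best tool for live audience engagement,yes
2026-01-12,Google AI Overviews,best tool for live audience engagement,no
"""


def mention_rates(log_csv: str) -> dict[str, float]:
    """Share of logged runs per engine in which the brand was mentioned."""
    runs = defaultdict(int)
    mentions = defaultdict(int)
    for row in csv.DictReader(io.StringIO(log_csv)):
        engine = row["engine"]
        runs[engine] += 1
        if row["brand_mentioned"].strip().lower() == "yes":
            mentions[engine] += 1
    return {engine: mentions[engine] / runs[engine] for engine in runs}


if __name__ == "__main__":
    for engine, rate in mention_rates(SAMPLE_LOG).items():
        print(f"{engine}: mentioned in {rate:.0%} of logged runs")
```

With only a handful of runs per engine these rates are noisy, so read them as a direction of travel until the log has built up over several months.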

The Bottom Line on AI Visibility as a Channel

AI visibility tools are a legitimate product category solving a real problem. LLM traffic is small but it’s high-intent, it converts well, and it’s growing fast. Understanding whether your brand is visible in the answers people are getting matters.

But the SparkToro research is a necessary corrective to the hype. AI tools almost never produce the same recommendation list twice, and any tool claiming to track where your brand ranks in AI recommendation lists is providing essentially random data points that shift with every query. Buy for mention rate and citation analysis. Don’t buy for rank position data.

The pricing in this category is genuinely high for what you get at most tiers. If you’re evaluating tools, push vendors on their prompt methodology, model coverage, and refresh frequency before you commit. The industry average of $337/month is a lot of budget to spend on directional data.

That said, if your LLM traffic is converting at anywhere near the rates the data suggests it should – and at StreamAlive, it is – then even modest gains in AI visibility have an outsized revenue impact. A channel that sends you 4% of traffic but converts at 15% produces more conversions than a channel that sends you 20% of traffic at 2%: 0.6 versus 0.4 conversions per 100 total sessions. At a 2% share the smaller channel still trails (0.3 per 100 sessions), which is exactly why a modest lift in visibility matters. That’s the case for investing in AI visibility now, even when the tracking tools are still maturing.
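The comparison above is simple arithmetic: conversions per 100 total sessions equal traffic share times conversion rate times 100. Here is a minimal sketch using the illustrative percentages from this post (not measured benchmarks), including the share at which the smaller channel draws level with the larger one.

```python
def conversions_per_100_sessions(traffic_share: float, conversion_rate: float) -> float:
    """Conversions generated by one channel per 100 total site sessions."""
    return 100 * traffic_share * conversion_rate


def break_even_share(other_share: float, other_rate: float, own_rate: float) -> float:
    """Traffic share at which a channel matches another channel's conversions."""
    if own_rate <= 0:
        raise ValueError("own_rate must be positive")
    return other_share * other_rate / own_rate


if __name__ == "__main__":
    # Illustrative figures from this post, not measured benchmarks.
    for share in (0.02, 0.04):
        llm = conversions_per_100_sessions(share, 0.15)
        print(f"LLM channel at {share:.0%} share, 15% conversion: {llm:.2f} per 100 sessions")
    big = conversions_per_100_sessions(0.20, 0.02)
    print(f"Larger channel at 20% share, 2% conversion: {big:.2f} per 100 sessions")
    print(f"Break-even LLM share: {break_even_share(0.20, 0.02, 0.15):.1%}")
```

Under these assumptions the break-even share is about 2.7%, so moving LLM traffic from 2% to 4% of sessions is the difference between trailing the larger channel and beating it.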

Here are the practical takeaways:

- Prioritize mention rate over rank position data — rank position in AI responses is statistically unreliable

- Run enough prompts per query to get meaningful data — 60-100 runs per prompt, not 10-15

- Before buying a platform, audit LLM traffic in GA4 first to understand your current baseline

- Treat LLM-referred traffic as high-intent bottom-of-funnel traffic, not top-of-funnel volume

- Match tool complexity to actual usage — Ubersuggest’s lifetime deal covers the basics; the enterprise platforms are only worth it if you’ll use the optimization features

- Watch conversion rates from this channel carefully: the pre-sell effect is real and significant

The tools will get better. The traffic will grow. Getting the tracking foundations right now, with clear eyes about what the data can and can’t tell you, puts you ahead of most competitors who are either ignoring AI visibility or over-investing based on metrics that don’t hold up.